Staying ahead in today’s fast-moving tech landscape means understanding not just what’s new, but what’s next. If you’re searching for clear, actionable insights on emerging technologies—from machine learning breakthroughs to quantum computing risks—you’re in the right place. This article is designed to cut through the hype and deliver practical analysis you can actually use.

We examine real-world developments in AI systems, evolving app development strategies, and the growing security implications of quantum advancements. You’ll also gain a deeper understanding of how to design, deploy, and maintain a production ML pipeline that performs reliably at scale.

Our insights are grounded in continuous monitoring of tech innovation alerts, hands-on evaluation of development frameworks, and careful review of peer-reviewed research and industry case studies. By the end, you’ll have a clearer view of emerging trends, their real-world impact, and how to position your projects or organization to stay competitive.

From Notebooks to Scalable Systems: The Production ML Blueprint

A brilliant model inside a notebook is just an experiment. A production ML pipeline turns that experiment into a reliable system real users depend on. In simple terms, “production” means software that runs automatically, handles errors, and scales without breaking.

Think of it like moving from a kitchen test recipe to a restaurant kitchen.

Key components include:

- Data ingestion: automated data collection

- Model training: repeatable, versioned workflows

- Deployment: serving predictions via APIs

- Monitoring: tracking accuracy and drift

Without these pieces, models stall in research instead of delivering measurable business impact consistently.

Step 1: Automating Data Ingestion and Validation

For a deeper look at the practices that move machine learning models smoothly from data to deployment, see our article “Oxzep7”.

A reliable data pipeline starts with a single truth: if your input is unstable, everything downstream wobbles. So first, connect directly to structured sources like data warehouses (e.g., Snowflake), REST APIs, or object storage such as S3. Automate ingestion with scheduled jobs or event triggers to avoid manual uploads (because spreadsheets emailed at midnight are not a strategy).

Garbage In, Garbage Out

Next, add validation checks before data enters your production ML pipeline. Automatically verify schema consistency, enforce data types, and flag null spikes. For example, if a numeric “price” field suddenly contains text, halt the run and alert your team. Tools like Great Expectations (https://greatexpectations.io/) make this straightforward.
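
To make those checks concrete, here is a minimal sketch in plain Python. It is not how Great Expectations works internally; the column names, the `EXPECTED_SCHEMA` mapping, and the 5% null threshold are all assumptions chosen for illustration.

```python
from typing import Any

# Hypothetical schema: column name -> accepted Python types.
EXPECTED_SCHEMA = {
    "product_id": (str,),
    "price": (int, float),
    "quantity": (int,),
}
MAX_NULL_RATE = 0.05  # assumed threshold; tune it per column in practice


def validate_batch(rows: list[dict[str, Any]]) -> list[str]:
    """Return a list of problems; an empty list means the batch passed."""
    if not rows:
        return ["batch is empty"]

    problems: list[str] = []
    for column, accepted_types in EXPECTED_SCHEMA.items():
        # Schema check: every row must carry the column.
        missing = sum(1 for row in rows if column not in row)
        if missing:
            problems.append(f"{column}: missing from {missing} row(s)")
            continue

        values = [row[column] for row in rows]

        # Null-spike check.
        null_rate = sum(1 for v in values if v is None) / len(values)
        if null_rate > MAX_NULL_RATE:
            problems.append(f"{column}: null rate {null_rate:.0%} is too high")

        # Type check on the non-null values.
        bad = [v for v in values if v is not None and not isinstance(v, accepted_types)]
        if bad:
            problems.append(f"{column}: {len(bad)} value(s) have an unexpected type")
    return problems
```

A pipeline step could call `validate_batch` on each incoming batch and halt the run (and alert the team) whenever the returned list is not empty.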

Then, monitor for data drift and concept drift. Compare incoming distributions against training baselines using statistical tests like KS tests. If customer behavior shifts significantly, retrain before performance silently degrades (think Netflix recommendations gone rogue).
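
As a rough illustration of the KS comparison, the sketch below computes the two-sample KS statistic by hand and compares it to the usual large-sample critical value. The function names and the default `alpha` of 0.05 are assumptions; in practice a statistics library would normally do this work, and the approximation is only reasonable for fairly large samples.

```python
import math
from bisect import bisect_right


def ks_statistic(baseline: list[float], incoming: list[float]) -> float:
    """Largest gap between the two empirical CDFs."""
    if not baseline or not incoming:
        raise ValueError("both samples must be non-empty")
    a, b = sorted(baseline), sorted(incoming)
    gap = 0.0
    # Empirical CDFs only change at observed values, so checking those is enough.
    for x in set(a) | set(b):
        cdf_a = bisect_right(a, x) / len(a)
        cdf_b = bisect_right(b, x) / len(b)
        gap = max(gap, abs(cdf_a - cdf_b))
    return gap


def drift_detected(baseline: list[float], incoming: list[float], alpha: float = 0.05) -> bool:
    """Flag drift when the statistic exceeds the large-sample critical value."""
    n, m = len(baseline), len(incoming)
    critical = math.sqrt(-math.log(alpha / 2) / 2) * math.sqrt((n + m) / (n * m))
    return ks_statistic(baseline, incoming) > critical
```

Running `drift_detected` on each feature, with the training data as `baseline`, gives a simple per-column signal that can feed a retraining trigger.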

Pro tip: Log every validation failure for trend analysis later.

Step 2: Engineering Reusable and Consistent Features

The Transformation Layer is where raw data becomes model-ready signal. This means scaling numerical values, encoding categorical variables, and creating interaction terms (new features formed by combining two or more variables to capture relationships). For example, multiplying “price” by “demand” can reveal revenue sensitivity patterns a single column never could. Think of it as upgrading ingredients before they enter the recipe.
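
Here is a small sketch of those three transformations using only the standard library. The function names are illustrative, and a real pipeline would more likely rely on a dedicated preprocessing library.

```python
from statistics import mean, pstdev


def standardize(values: list[float]) -> list[float]:
    """Scale values to zero mean and unit variance."""
    mu, sigma = mean(values), pstdev(values)
    if sigma == 0:
        return [0.0 for _ in values]  # a constant column carries no signal
    return [(v - mu) / sigma for v in values]


def one_hot(values: list[str], categories: list[str]) -> list[list[int]]:
    """Encode each value as a 0/1 vector over a fixed category list."""
    return [[1 if v == c else 0 for c in categories] for v in values]


def interaction(left: list[float], right: list[float]) -> list[float]:
    """Multiply two columns element-wise, e.g. price * demand."""
    return [l * r for l, r in zip(left, right)]
```

For example, `interaction(price, demand)` produces the revenue-style feature described above, one value per row.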

Here’s the competitive edge most teams miss: build this as a dedicated, version-controlled component inside your production ML pipeline. Not scattered notebooks. Not copy-pasted scripts. A SINGLE SOURCE OF TRUTH.

Why? Because training-serving skew is the silent killer of ML systems. This happens when transformations applied during model training differ—even slightly—from those used in real-time inference. A minor mismatch in scaling logic can tank performance overnight (yes, it happens more than teams admit).
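
One common way to avoid this kind of skew is to fit transformation parameters once, persist them, and have both training and serving call the same function. The sketch below assumes a simple standard scaler and JSON as the storage format; the names `ScalerParams`, `fit_scaler`, and `scale` are hypothetical.

```python
import json
from dataclasses import asdict, dataclass


@dataclass(frozen=True)
class ScalerParams:
    mean: float
    std: float


def fit_scaler(values: list[float]) -> ScalerParams:
    """Compute scaling parameters from training data only."""
    mu = sum(values) / len(values)
    variance = sum((v - mu) ** 2 for v in values) / len(values)
    # Fall back to 1.0 so a constant column does not divide by zero.
    return ScalerParams(mean=mu, std=variance ** 0.5 or 1.0)


def scale(value: float, params: ScalerParams) -> float:
    """The one transformation used by both training and serving."""
    return (value - params.mean) / params.std


# Training side: fit once and persist the parameters next to the model.
params = fit_scaler([10.0, 20.0, 30.0])
saved = json.dumps(asdict(params))

# Serving side: load the same parameters instead of recomputing them.
loaded = ScalerParams(**json.loads(saved))
```

Because serving never refits the scaler, a value scaled at inference time goes through exactly the arithmetic the model saw during training.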

Enter the Feature Store: a centralized repository for validated, reusable features.

• Ensures CONSISTENCY across models

• Enables point-in-time correctness for training datasets

• Reduces duplicated engineering effort
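
To show what point-in-time correctness means in practice, here is a toy in-memory store. It is a sketch, not a model of any real feature store product: the class name, method names, and integer timestamps are assumptions.

```python
from bisect import bisect_right
from typing import Optional


class InMemoryFeatureStore:
    """Keeps the full history of each feature so reads can be made 'as of' a time."""

    def __init__(self) -> None:
        # (entity_id, feature_name) -> list of (timestamp, value), sorted by timestamp
        self._history: dict[tuple[str, str], list[tuple[int, float]]] = {}

    def write(self, entity_id: str, feature: str, timestamp: int, value: float) -> None:
        series = self._history.setdefault((entity_id, feature), [])
        series.append((timestamp, value))
        series.sort(key=lambda item: item[0])

    def read_as_of(self, entity_id: str, feature: str, timestamp: int) -> Optional[float]:
        """Return the latest value written at or before `timestamp`, if any."""
        series = self._history.get((entity_id, feature), [])
        index = bisect_right([ts for ts, _ in series], timestamp)
        return series[index - 1][1] if index else None
```

When building a training set, reading each feature as of the label's timestamp keeps future information from leaking into past examples.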

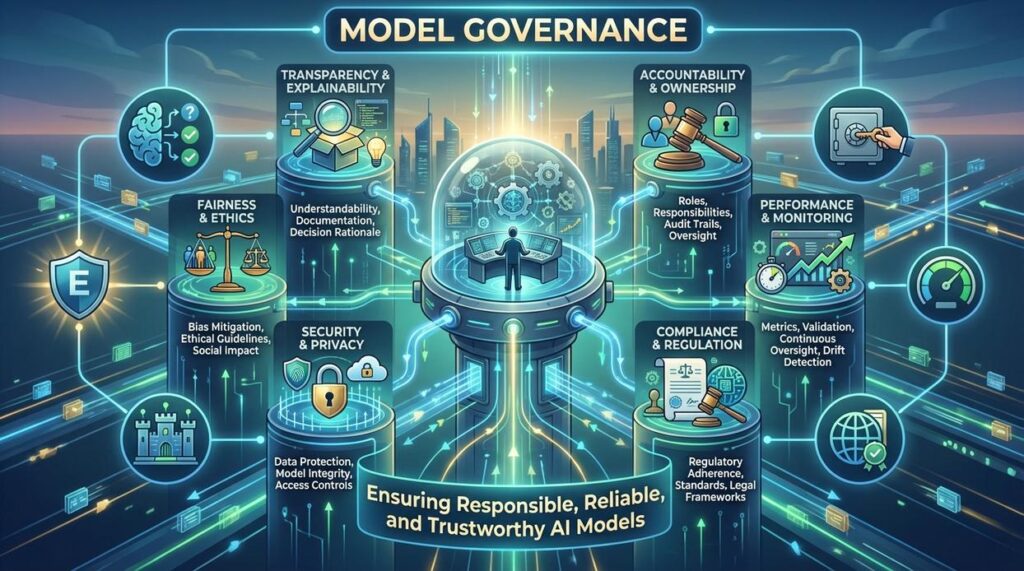

Some argue feature stores add complexity. Fair. But without them, governance and even discussions around ethical challenges in machine learning and how to address them become harder to enforce at scale.

Step 3: Systematizing Model Training and Versioning

Beyond a quick model.fit() call, model training should be treated like an engineering assembly line, not a science fair project. A repeatable process means every run follows the same scripted steps—data ingestion, preprocessing, training, evaluation—triggered automatically through CI/CD or scheduled jobs. Think less “garage startup montage” and more Tony Stark’s lab: precise, automated, and logged.

This is where experiment tracking comes in. Every training run should record:

- Code version (the exact commit hash)

- Data version (snapshot or dataset ID)

- Hyperparameters (learning rate, batch size, architecture choices)

- Performance metrics (accuracy, F1, AUC, loss curves)
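
The four items above map naturally onto a small record type. This sketch serializes each run to one JSON line; the `TrainingRun` class and the example values are placeholders, and a dedicated experiment tracker would typically replace it.

```python
import json
from dataclasses import asdict, dataclass, field


@dataclass
class TrainingRun:
    code_version: str        # exact commit hash
    data_version: str        # snapshot or dataset ID
    hyperparameters: dict
    metrics: dict = field(default_factory=dict)


def to_log_line(run: TrainingRun) -> str:
    """Serialize a run as one JSON line, ready to append to a log file."""
    return json.dumps(asdict(run), sort_keys=True)


# Placeholder values for illustration only.
run = TrainingRun(
    code_version="abc1234",
    data_version="snapshot-001",
    hyperparameters={"learning_rate": 0.001, "batch_size": 32},
)
run.metrics["f1"] = 0.87
line = to_log_line(run)
```

Appending one such line per run gives a searchable history without any extra infrastructure.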

Without this, you’re basically trying to remember which Stranger Things season had which monster—possible, but messy. Logging creates accountability and reproducibility (two words investors and engineers both love).

Next is the model registry: the single source of truth for production-ready models. A model registry stores artifacts (model binaries, metadata), assigns version numbers, and tracks lineage from raw data to deployed endpoint. In a production ML pipeline, this ensures you know exactly what’s running in production and why.
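
A registry can be sketched in a few lines to show the idea. This in-memory version is an assumption-laden toy (the class names, fields, and example URIs are hypothetical); real registries add persistent storage, access control, and stage transitions.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class ModelVersion:
    name: str
    version: int
    artifact_uri: str   # where the model binary lives
    code_version: str   # lineage: commit that trained it
    data_version: str   # lineage: dataset it was trained on


class ModelRegistry:
    def __init__(self) -> None:
        self._versions: dict[str, list[ModelVersion]] = {}
        self._production: dict[str, int] = {}

    def register(self, name: str, artifact_uri: str, code_version: str, data_version: str) -> ModelVersion:
        """Store a new artifact and assign the next version number."""
        versions = self._versions.setdefault(name, [])
        entry = ModelVersion(name, len(versions) + 1, artifact_uri, code_version, data_version)
        versions.append(entry)
        return entry

    def promote(self, name: str, version: int) -> None:
        """Mark a registered version as the one serving production traffic."""
        if not any(v.version == version for v in self._versions.get(name, [])):
            raise KeyError(f"{name} v{version} is not registered")
        self._production[name] = version

    def production(self, name: str) -> Optional[ModelVersion]:
        version = self._production.get(name)
        return None if version is None else self._versions[name][version - 1]
```

Because `promote` refuses unregistered versions, anything running in production can always be traced back to its code and data versions.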

Pro tip: never deploy a model that isn’t registered and versioned. If you can’t trace it, you can’t trust it.

Step 4: Robust Model Evaluation and Deployment Strategies

Robust evaluation is where MANY machine learning initiatives quietly fail. Offline evaluation tests a model against a static holdout dataset, while online evaluation measures performance on live user traffic. Both matter. A 2023 Google study found that up to 60% of models that perform well offline degrade in production due to data drift and user behavior shifts.

Offline vs. Online: What the Data Shows

Offline metrics (like accuracy, F1-score, or RMSE) validate statistical fit. Online metrics tie directly to BUSINESS IMPACT—conversion rate, churn reduction, or latency.

| Evaluation Type | Environment | Key Metrics | Risk Level |
|---|---|---|---|
| Offline | Static dataset | Accuracy, F1, RMSE | Low |
| Online | Live production | CTR, Revenue, Latency | Higher |

Some argue strong offline performance is enough. Evidence says otherwise. Netflix reported measurable engagement gains only after controlled online A/B validation.

Automated promotion in a production ML pipeline compares a newly trained “challenger” against the current “champion.” If the challenger exceeds predefined thresholds on holdout data, it is auto-promoted.
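
A promotion gate of this kind can be as simple as the sketch below. The metric names (`f1`, `latency_ms`) and both thresholds are assumptions for illustration; each team would pick its own criteria.

```python
def should_promote(
    champion: dict[str, float],
    challenger: dict[str, float],
    min_f1_gain: float = 0.01,
    max_latency_ms: float = 100.0,
) -> bool:
    """Promote only if the challenger is clearly better and still fast enough."""
    f1_gain = challenger["f1"] - champion["f1"]
    fast_enough = challenger["latency_ms"] <= max_latency_ms
    return f1_gain >= min_f1_gain and fast_enough
```

Requiring a minimum gain, rather than any improvement at all, helps keep noise in the holdout metrics from triggering a pointless swap.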

Safe deployment patterns reduce exposure. Shadow deployment runs predictions silently. Canary releases send 5–10% of traffic to the new model. A/B testing measures statistically significant differences (pro tip: require p < 0.05 before scaling). These safeguards turn experimentation into controlled progress—not guesswork.
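
Two of these safeguards are easy to sketch: deterministic canary routing and a significance check for an A/B test on conversion rates. Both functions are illustrative assumptions; the hashing scheme and the two-proportion z-test are one reasonable choice among several, not a prescribed method.

```python
import hashlib
import math


def routes_to_canary(user_id: str, fraction: float = 0.05) -> bool:
    """Send a stable slice of users to the new model, based on a hash of their ID."""
    digest = hashlib.sha256(user_id.encode("utf-8")).digest()
    bucket = int.from_bytes(digest[:8], "big") % 10_000  # 0..9999
    return bucket < fraction * 10_000


def two_proportion_p_value(conv_a: int, n_a: int, conv_b: int, n_b: int) -> float:
    """Two-sided p-value for a difference in conversion rates (pooled z-test)."""
    pooled = (conv_a + conv_b) / (n_a + n_b)
    std_error = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    if std_error == 0:
        return 1.0  # no variation at all, so no evidence of a difference
    z = (conv_b / n_b - conv_a / n_a) / std_error
    return math.erfc(abs(z) / math.sqrt(2))
```

Hashing the user ID keeps each user on the same model across requests, which makes the later A/B comparison cleaner than random per-request routing.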

Automating for Impact: Your Next Steps in MLOps

A structured pipeline bridges the gap between experimentation and dependable deployment. According to a 2023 Gartner report, over 80% of machine learning projects fail to reach production, largely due to manual, inconsistent processes. Automation changes that.

Human-driven handoffs invite errors, version drift, and late-night fire drills (the kind that make dashboards feel like horror movies). By replacing fragile steps with automated validation, testing, and monitoring, teams create RESILIENT systems that deliver consistent value. A case study from Google Cloud found that CI/CD-driven ML workflows reduced deployment errors by 60%.

Component-based design enables modular debugging, reproducibility, and horizontal scaling. Each stage feeds the next, forming a production ML pipeline that adapts as data grows.

Start small. Automate data validation first; measure defect rates, track time saved, then expand. Momentum compounds.

Stay Ahead of Disruption Before It Costs You

You came here to understand how emerging tech trends, machine learning breakthroughs, quantum computing risks, and modern app development strategies are reshaping the landscape. Now you have a clearer picture of what’s changing, what’s accelerating, and what demands your attention.

The real challenge isn’t access to information; it’s keeping up before competitors do. Falling behind on AI advancements, ignoring quantum security implications, or failing to optimize your production ML pipeline can quietly erode your edge. In fast-moving tech environments, delay is expensive.

The smartest move now is action. Audit your current systems. Stress-test your security assumptions. Optimize your production ML pipeline for scale and resilience. And most importantly, stay consistently informed about what’s coming next.

If staying ahead of disruptive tech feels overwhelming, plug into a trusted source delivering real-time innovation alerts, deep machine learning insights, and practical development strategies. Thousands of forward-thinking builders and tech leaders rely on timely updates to make smarter decisions.

Don’t wait until disruption forces your hand. Get the insights you need, apply them fast, and position yourself ahead of the curve today.

Drevian is the kind of writer who genuinely cannot publish something without checking it twice. Maybe three times. They came to app development techniques through years of hands-on work rather than theory, which means the things they write about (App Development Techniques, Tech Innovation Alerts, Pro Perspectives, among other areas) are things they have actually tested, questioned, and revised opinions on more than once.

That shows in the work. Drevian's pieces tend to go a level deeper than most. Not in a way that becomes unreadable, but in a way that makes you realize you'd been missing something important. They have a habit of finding the detail that everybody else glosses over and making it the center of the story, which sounds simple but takes a rare combination of curiosity and patience to pull off consistently. The writing never feels rushed. It feels like someone who sat with the subject long enough to actually understand it.

Outside of specific topics, what Drevian cares about most is whether the reader walks away with something useful. Not impressed. Not entertained. Useful. That's a harder bar to clear than it sounds, and they clear it more often than not, which is why readers tend to remember Drevian's articles long after they've forgotten the headline.
